Securing Your Velociraptor Deployment

Our team are huge fans of Velociraptor. It's an incredibly powerful tool, for both DFIR and endpoint management. It currently supports Windows, Linux, and Mac endpoints, and BONUS: it's open source!

We use it extensively, and we have also embedded it into our NDR Training!

If you are unfamiliar with it: Velociraptor gives you complete access to every endpoint in your fleet and a library of predefined artifacts (or you can craft your own with VQL, the Velociraptor Query Language), and it lets you drop to a shell on a box with a single click.

Obviously, a tool this powerful deserves some careful time and attention when it comes to deployment and security.

The developer of the tool, Mike Cohen, has baked in many security features to help ensure a proper deployment: SSL for the frontend, the API, and client communications; ACLs; OAuth (which can enforce MFA); and more. However, until recently, certain features assumed that the server running Velociraptor would be publicly accessible on the internet.

We are firm believers in keeping valuable assets as far away from the publicly accessible internet as possible (we hear there are some bad people out there who like to do bad things). In this case, the server resides in a segmented, private subnet that is reachable only through a bastion host, which in turn requires a VPN connection.

Why is this important, particularly in the case of Velociraptor?

Velociraptor absolutely falls in the "valuable asset" category.

Recent changes to the application now let you terminate SSL wherever you choose, rather than only on the Velociraptor server, using certificates of your choosing. That means the server itself no longer has to face the internet, and your infrastructure can be more secure. Thanks Mike!

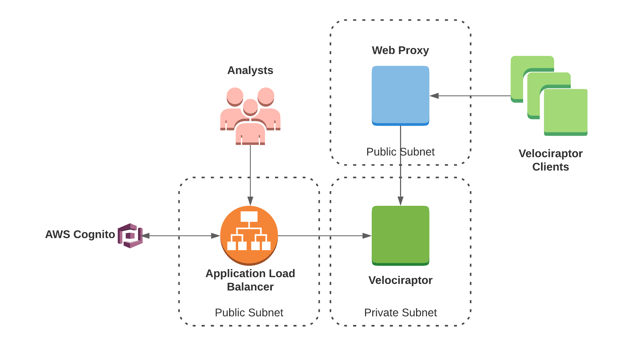

EXAMPLE DEPLOYMENT

In this configuration, the Velociraptor server sits in a private subnet. The load balancer and proxy sit in public subnets.

Traffic between the client(s) and the server is encrypted with self-signed certificates, Let's Encrypt, or another certificate of your choosing, and SSL is configured and terminated on the web proxy.

Traffic between the users/analysts and the server is also encrypted, and in this particular case the GUI sits behind an identity-aware proxy that enforces MFA/SSO.

SERVER CONFIGURATION

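These are the parts of the server configuration you will need to pay attention to.

The pinned_server_name setting is present by default and must be commented out to use self-signed certificates or your own domain names, because otherwise the client expects the server's certificate to carry that pinned name. Since SSL is terminated on the web proxy and the load balancer, both the GUI and the Frontend listen over plain HTTP (use_plain_http: true) on ports of your choosing, and public_url is the HTTPS address analysts use to reach the GUI.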

```yaml
Client:
  server_urls:
  - https://velociraptor-frontend/
  ...
  use_self_signed_ssl: true
  #pinned_server_name: VelociraptorServer
  ...
GUI:
  bind_address: 0.0.0.0
  bind_port: $PORT-OF-YOUR-CHOOSING
  use_plain_http: true
  ...
  public_url: https://velociraptor-gui/
  ...
Frontend:
  hostname: $HOSTNAME
  bind_address: 0.0.0.0
  bind_port: $PORT-OF-YOUR-CHOOSING
  use_plain_http: true
```
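To catch typos before restarting the server, the GUI and Frontend settings above can be sanity-checked with a short script. This is a minimal sketch, assuming the server config has already been parsed into a Python dict (for example with the third-party PyYAML package's `yaml.safe_load`). `check_server_config` is a hypothetical helper, not part of Velociraptor.

```python
from urllib.parse import urlparse


def check_server_config(cfg: dict) -> list[str]:
    """Return problems with the GUI/Frontend settings (an empty list means none).

    Hypothetical helper: cfg is assumed to be the already-parsed server config.
    """
    problems = []
    gui = cfg.get("GUI", {})
    frontend = cfg.get("Frontend", {})

    # SSL terminates on the web proxy / ALB, so both listeners speak plain HTTP.
    if gui.get("use_plain_http") is not True:
        problems.append("GUI.use_plain_http should be true")
    if frontend.get("use_plain_http") is not True:
        problems.append("Frontend.use_plain_http should be true")

    # Analysts reach the GUI through the load balancer, so the public URL is HTTPS.
    if urlparse(gui.get("public_url", "")).scheme != "https":
        problems.append("GUI.public_url should be an https:// URL")

    # Each listener needs an explicit port for the proxy / ALB to forward to.
    for section, values in (("GUI", gui), ("Frontend", frontend)):
        if "bind_port" not in values:
            problems.append(f"{section}.bind_port is not set")
    return problems
```

With PyYAML installed, passing `yaml.safe_load(...)` of your server config file to `check_server_config` returns a list of human-readable problems; an empty list means these particular settings look consistent with the deployment described here.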

CLIENT CONFIGURATION

These are the parts of the client configuration you will need to pay attention to.

Again, pinned_server_name is present by default and must be commented out to use self-signed certificates or your own domain name.

```yaml
Client:
  server_urls:
  - https://velociraptor-frontend/
  ...
  use_self_signed_ssl: true
  #pinned_server_name: VelociraptorServer
  ...
```
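The Client section appears in both the server and the client configuration, so one check can cover both files. This sketch assumes the config has been parsed into a dict (for example with PyYAML's `yaml.safe_load`) and that `frontend_host` is the Frontend DNS name you created; `check_client_config` is a hypothetical helper. Because a commented-out YAML key never appears in the parsed dict, finding `pinned_server_name` means it is still active.

```python
from urllib.parse import urlparse


def check_client_config(cfg: dict, frontend_host: str) -> list[str]:
    """Return problems with the Client section (an empty list means none)."""
    problems = []
    client = cfg.get("Client", {})
    urls = client.get("server_urls") or []
    if not urls:
        problems.append("Client.server_urls is empty")
    for url in urls:
        parsed = urlparse(url)
        # Clients should talk SSL to the web proxy, never plain HTTP to the server.
        if parsed.scheme != "https":
            problems.append(f"{url}: should use https")
        # Clients should use the Frontend DNS name that points at the proxy.
        if parsed.hostname != frontend_host:
            problems.append(f"{url}: expected host {frontend_host}")
    # A commented-out key is absent after parsing; present means still active.
    if "pinned_server_name" in client:
        problems.append("Client.pinned_server_name should be commented out")
    return problems
```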

DNS CONFIGURATION

In this configuration, you'll need two DNS entries:

- One for client communications (the Frontend), pointing to your web proxy's address
- One for the web interface (the GUI), pointing to your ALB or whatever else you are putting in front of the web interface
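Before pointing clients at the new names, it's worth confirming that each record resolves where you expect. This is a minimal sketch using only the standard library; `resolve` and `check_dns` are hypothetical helpers. It compares names by overlapping IP addresses rather than exact equality, on the assumption that a load balancer may have several addresses that change over time.

```python
import socket


def resolve(name: str) -> set[str]:
    """Return every IP address `name` resolves to, or an empty set on failure."""
    try:
        infos = socket.getaddrinfo(name, None, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        return set()
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return {info[4][0] for info in infos}


def check_dns(records: dict[str, str], resolver=resolve) -> list[str]:
    """Check that each DNS name shares at least one address with its target.

    records maps a DNS name (the Frontend or GUI name) to the address or
    hostname it should point at (the web proxy, or the load balancer).
    """
    problems = []
    for name, target in records.items():
        got, want = resolver(name), resolver(target)
        if not got:
            problems.append(f"{name} does not resolve")
        elif not got & want:
            problems.append(f"{name} -> {sorted(got)}, but {target} -> {sorted(want)}")
    return problems
```

For example, `check_dns({"velociraptor-frontend": "203.0.113.10", "velociraptor-gui": "my-alb.example.com"})` would check both records, where the proxy address and ALB hostname are placeholders for your own.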

WEB PROXY CONFIGURATION

The web proxy can be any flavor you like, as long as it reverse proxies to the Velociraptor server on the Frontend port.

You'll need to generate certificates (self-signed or otherwise; DigitalOcean provides excellent write-ups for Let's Encrypt) and make sure your Velociraptor client configuration points to the DNS entry you created for the Frontend.
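Because the Velociraptor server sits in a private subnet, the proxy host must be able to reach it on the Frontend port, while machines outside the VPN must not. A plain TCP connection test covers both directions. This is a sketch: `port_reachable` is a hypothetical helper, and the address and port used below are placeholders for your server's private IP and Frontend bind_port.

```python
import socket


def port_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection raises OSError (including timeouts) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Run `port_reachable("10.0.1.10", 8000)` on the proxy host and expect True; run the same call from a machine outside the VPN and expect False. A True from outside would suggest the server is exposed directly rather than only through the proxy.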

ALB CONFIGURATION

If you're using AWS and an ALB (or another cloud provider or load balancer), it will need to point to the Velociraptor server on the GUI port. You can configure SSL certificates through your cloud provider, or use a proxy setup similar to the web proxy.

Either approach works, but in this example the ALB lets you place an identity-aware layer such as AWS Cognito in front of the web interface as an additional layer of security.

RENEWING CERTIFICATES

Velociraptor still uses an internal self-signed certificate for the API and gRPC gateway. It isn't especially relevant for deployment purposes until it needs to be renewed.

By default, this certificate expires after one year, so you will need to regenerate your Velociraptor configuration yearly to keep it valid.

Similarly, if you're using Let's Encrypt or any other certificate on the web proxy, you'll need to set up automatic renewals for it as well.
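Renewal jobs can fail silently, so a separate expiry check is cheap insurance. This sketch uses Python's standard `ssl` module to read the expiry (`notAfter`) of the certificate served at a given host and port. The helpers `fetch_not_after` and `days_remaining` are hypothetical. The handshake is fully verified, so for a self-signed certificate you would pass its CA file as `cafile`. It only covers certificates served over the network, such as the one on the web proxy, not the internal certificate embedded in the Velociraptor configuration.

```python
from __future__ import annotations

import socket
import ssl
import time


def fetch_not_after(host: str, port: int = 443, cafile: str | None = None) -> str:
    """Return the notAfter field of the certificate served at host:port.

    The handshake verifies the chain and hostname, so pass `cafile` for a
    self-signed CA; an invalid certificate raises ssl.SSLError.
    """
    context = ssl.create_default_context(cafile=cafile)
    with socket.create_connection((host, port), timeout=5) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_remaining(not_after: str, now: float | None = None) -> float:
    """Days until a certificate's notAfter timestamp (negative once expired)."""
    if now is None:
        now = time.time()
    return (ssl.cert_time_to_seconds(not_after) - now) / 86400
```

For example, `days_remaining(fetch_not_after("velociraptor-frontend"))` reports how many days the proxy's certificate has left; a scheduled job could alert when that drops below whatever threshold suits your renewal cadence.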

LET'S GO HUNT!

Now that you've got a secure deployment, it's time for the fun stuff. You can pull event logs, download files, deploy agents, perform YARA scans, kick off osquery queries, you name it.

The Windows.KapeFiles.Targets artifact will collect the $MFT, event logs, Prefetch, Amcache, and more, and you can use it to triage thousands of endpoints simultaneously.

Check out Eric's video below for a walk-through of deploying endpoint agents across an environment, finding evil, and collecting triage acquisitions.

WANT TO TRAIN WITH US?

We would love to train you and your team! Find more information about our offerings here, and drop us a line.

We've built highly realistic attack scenarios modeled after real-world threat actors that target industries just like yours.

NDR shows teams how to take a structured approach to incident response. Additionally, it trains in effective team dynamics, such as delegation, communication, and documentation. Even the best and the brightest SOC teams can level up their soft skills while staying sharp on threat hunting.

BUT WAIT! THERE'S MORE!

If you want to learn more about Velociraptor, go check out their docs, or check out their awesome training offerings. They have a public Discord available as well.

We thrive on giving back to a community that has provided us with so much of what we use and rely on. Everything we've open sourced can be found in our GitHub, @ReconInfoSec.