Some of you may remember our last event, Camp COVID. That was the biggest event we had ever run.

UNTIL LAST WEEK: DEF CON 28

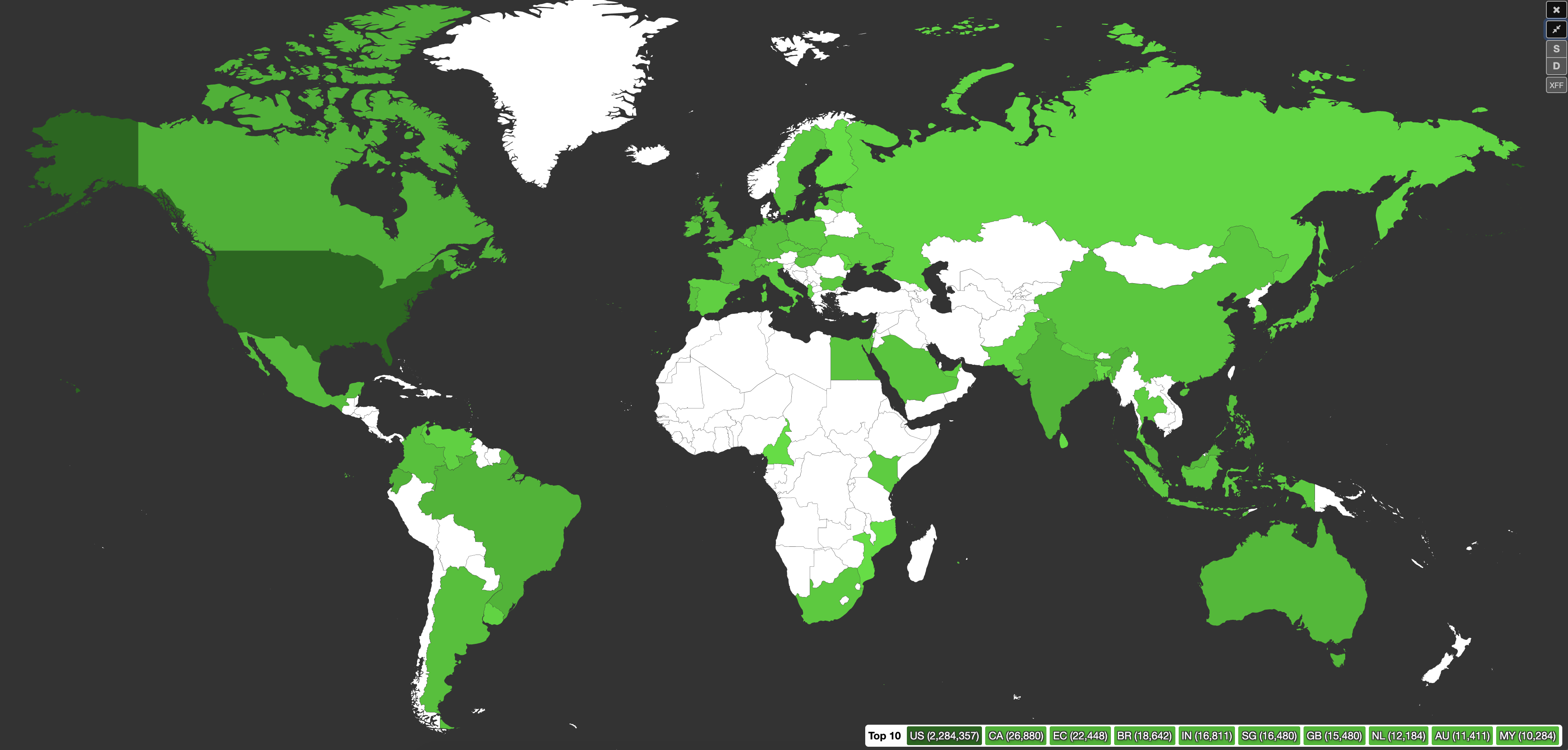

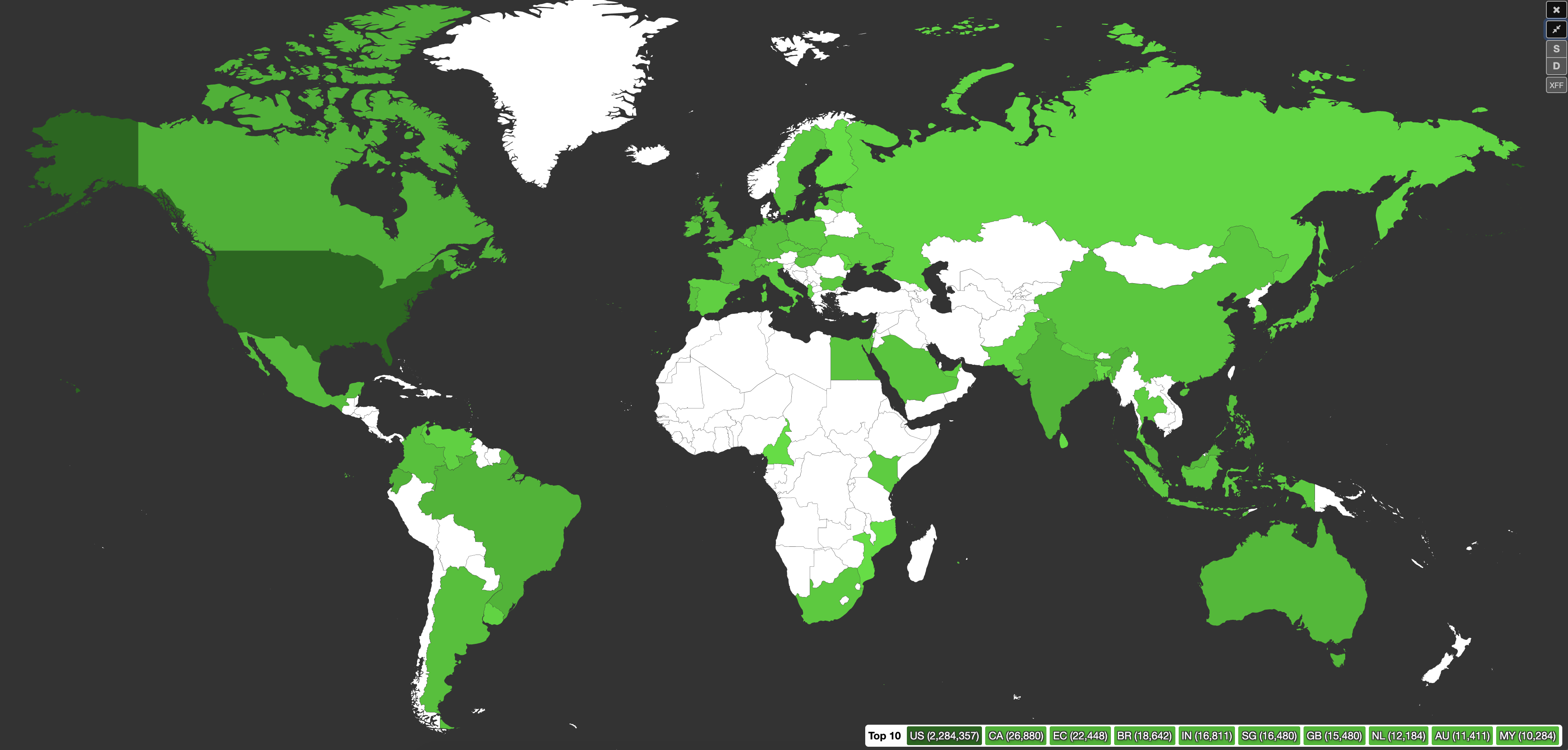

The stats speak for themselves... and so does the participant map above :)

Stats

8M Graylog queries

91K+ scoreboard submissions

800+ participants

500+ challenges

350+ teams

260GB+ PCAPs

150GB+ endpoint telemetry

10K+ osquery queries

20+ hours of content

GLOBAL participation: AGAIN. This was epic.

A remote DEF CON meant people from everywhere were jumping in, and we're stoked that we were able to have that kind of reach and participation.

Format

Since DEF CON is a 3-day event for us, we've had luck in the last 2 years running 2 days of a general round, with finals on Sunday. In this case, we let the top 20 teams compete in the Sunday finals.

We opted to not run overnight, similar to last year. Most of the team was toast, and after some discussion, I think going forward we will adhere to the con hours in order to remain at all functional.

We know this was a bit disheartening to players participating in other time zones, but we are a tiny team, and we need sleep too. Especially since we had just come straight out of 4 days of Black Hat, and an IR prior to that.

This was heightened even more by the fact that we had Discord tickets coming in nonstop (to get approved on our network, for help with challenges, for kicking off Velociraptor hunts, you name it). I fear that with less sleep, those tickets would've gotten a lot more colorful.

Discord

We decided to use Discord this year. The con was already using it, BTV was using it, and we already use it internally for training events. SO. Naturally, it made sense to move OpenSOC events to it as well.

As I mentioned--there were tickets. Like 1500 of them. The YAGPDB bot handled all the things, and we had a process down for all of them coming in. It was just a matter of divide and conquer after that.

Easier said than done during some parts of the day(s), but we managed! :)

If you didn't get to make it to this OpenSOC event, you should probably just join our Discord anyway and get in on our future events :) Just sayin.

ZeroTier

Similar to past years, we had everyone join our ZeroTier network in order to participate. This went really well, again, so kudos to ZeroTier for staying awesome.

One of the best parts of ZeroTier was that the ZeroTier ID associated with everyone we allowed in was tied to their Discord ID (we'd know the IP either way, but this allowed us to follow up with folks in Discord). SO, we could see every search/query/hunt/GET/POST/sneeze across the network tied to each participant.

Keep that in mind for future events, nerds.
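For the curious, here's a rough sketch of how that kind of attribution can work. This is not our actual tooling; the node IDs, IPs, Discord handles, and function names below are all made up for illustration. The idea is simply that once a ZeroTier node ID is recorded next to a Discord handle at approval time, any log event with a source IP can be joined back to a person.

```python
from collections import Counter

# Hypothetical data, recorded when a join ticket is approved:
# ZeroTier node ID (10 hex digits) -> Discord handle.
MEMBERS = {
    "a1b2c3d4e5": "player_one#0001",
    "f6e5d4c3b2": "player_two#0002",
}

# Hypothetical data: IP assigned on the ZeroTier network -> node ID.
IP_TO_NODE = {
    "10.147.17.10": "a1b2c3d4e5",
    "10.147.17.11": "f6e5d4c3b2",
}


def attribute(event):
    """Return a copy of a log event with the node ID and Discord handle attached."""
    node = IP_TO_NODE.get(event["src_ip"])
    return {**event, "zt_node": node, "discord": MEMBERS.get(node, "unknown")}


def activity_by_participant(events):
    """Count events per Discord handle."""
    return Counter(attribute(e)["discord"] for e in events)


events = [
    {"src_ip": "10.147.17.10", "action": "GET /challenges"},
    {"src_ip": "10.147.17.10", "action": "POST /submit"},
    {"src_ip": "10.147.17.11", "action": "search"},
    {"src_ip": "10.147.17.99", "action": "search"},  # IP we never approved
]
print(activity_by_participant(events))
```

Anything coming from an IP that isn't in the map falls into an "unknown" bucket, which is its own kind of interesting.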

Scenarios & Validation

We ran 10 scenarios that made up the 500+ challenges mentioned above.

That's 500+ challenges that needed...

- to be validated after playbooks were run

- to have validation queries solidified and tested

- to have answers found/double checked/triple checked in the scoreboard

- to have regexes tested
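To give a feel for that last item, here's a minimal sketch of testing an answer regex before a challenge goes live. The helper name, the pattern, and the sample answers are all hypothetical, and this assumes answers are matched case-insensitively against the whole submission, which may not be how your scoreboard does it.

```python
import re


def check_answer_regex(pattern, should_match, should_reject):
    """Return a list of failures; an empty list means the regex behaves."""
    compiled = re.compile(pattern, re.IGNORECASE)
    failures = []
    for answer in should_match:
        # A valid answer has to match in full, not just as a substring.
        if not compiled.fullmatch(answer.strip()):
            failures.append(f"rejected a valid answer: {answer!r}")
    for answer in should_reject:
        if compiled.fullmatch(answer.strip()):
            failures.append(f"accepted an invalid answer: {answer!r}")
    return failures


# Hypothetical challenge: "What is the full path of the dropped file?"
pattern = r"(?:c:\\)?windows\\temp\\update\.exe"
failures = check_answer_regex(
    pattern,
    should_match=["C:\\Windows\\Temp\\update.exe", "windows\\temp\\update.exe "],
    should_reject=["update.exe", "C:\\Windows\\Temp\\updateXexe"],
)
print(failures or "regex looks good")
```

The reject list matters as much as the match list: an overly loose pattern will happily hand out points for wrong answers, and an overly tight one generates tickets.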

This team was on FIRE staying ahead of the participants, while continuing to kick off nefarious red team activities and support everyone in the game.

And what's even MORE impressive are the months of research and development before this event that went into creating the scenarios themselves. This team has turned that process into an art.

If you are unfamiliar with how our scenarios work, check out this breakdown of the oldest and longest running (now retired) scenario, "Urgent IT Update!!!". Get all the nerdy details (read: awesome details) from Eric.

Infrastructure

I say this every time: like every event we run, we inevitably have hiccups along the way. Huge live environment, lots of moving parts, hundreds of people beating it up--things happen.

BUT. It makes us unbelievably happy to be able to say...

That aside from the #!?@#$* scoreboard, EVERYTHING HELD UP THROUGHOUT THE ENTIRE EVENT <3 Throughout the pounding and querying and F5-ing and abuse, all the things stayed happy 99% of the time.

Biggest lesson learned here: despite how much I thought was enough, throw EVEN MORE horsepower at Elastic (this weekend it was doubled from Camp COVID) when there are EVEN MORE hundreds of angry nerds creating insane queries and Kibana visualizations.

Elastic got a little angry for a minute due to some folks not playing nice (like the person tossing Splunk queries into Elastic (WHY), or all the ridiculously bad regex), so we ended up taking away write access to Kibana and pointing some folks at (even more) Lucene query docs. Smooth sailing after that.

Scoreboard

This was pretty much our biggest pain point besides the headaches of data validation. Which, in the end, we'll take!

Unlike last time, we did not have CTFd host our scoreboard--we ran it on a massive instance in AWS. We had a handful of reasons for this, the biggest being that we needed to be able to put it behind ZeroTier. Another reason: plugins. Another reason: the ability to view logs, which isn't an option on the enterprise version. Plus a couple of other things.

Anyway, no matter how much we tweaked Redis settings and allowed more connections, it continued to choke on itself and throw errors when it hadn't even hit 25% of that connection limit, or even put a strain on the system. If it isn't obvious, our priorities were on literally everything else.

We are still working on our own internal solution so we no longer need to rely on a 3rd party product, and this event only further solidified that decision.

Good Vibes

We'd like to say thank you to folks who jumped in and mentored others throughout the entire event. And to everyone who volunteered.

There were several people who went out of their way to help other participants, whether it was with the challenges or just getting on the network. They spent hours helping others troubleshoot and threat hunt, hours they could've spent doing literally anything else.

These folks in particular:

- Milkman

- KelseyS

- snoopy

- DreadGod

- ashking91

There were also several teams made up of total strangers prior to the event, and now they have a handful of new internet friends.

Just one of the many reasons we love being part of this community is seeing that kind of collaboration come to life.

Congrats

And finally, huge kudos to our top teams!

And our top 3 solo players!

- kenlee

- Diz_Sec

- Milagros Coldiron

Scoreboard screenshots:

- Day 1 scoreboard: link

- Day 2 scoreboard: link

- Day 3 scoreboard, finals: link

Thank You

We hope you all had a great time. We love running OpenSOC--it is really a labor of love for this team, and it takes a lot of it.

We thrive on giving back to a community that has provided us with so much of what we use and rely on, so thank you for helping us continue to grow that. Especially during a year as crazy as this one.

Please join us at one of our next events. Follow us on Twitter to stay up to date, and also take a look at our other training offerings.